A critical part of making sound business decisions is finding the right information at the right time.

For most research processes, this inevitably involves a keyword or company search (often both) to uncover related documents and the content that yields critical insights. One of the unique aspects of AlphaSense is that users can quickly and accurately mine millions of pieces of content and have the most relevant sources surfaced instantly.

So, how does AlphaSense do this?

The platform simultaneously ingests and searches through terabytes of historical and current content, quickly surfacing the most relevant information in the process. In short, the answer lies in how we power our indexing and search technology – with Apache Solr.

The power of Apache Solr

For those not familiar with Apache Solr, it is an open-source search platform that is highly reliable, scalable, and fault-tolerant. The team here at AlphaSense has been working with Apache Solr for over a decade, modifying and improving it specifically to help our clients make critical business and financial decisions.

Over the years, Apache Solr has taken several evolutionary steps in scalability, growing from a single static Solr core server into a scalable, fault-tolerant, distributed SolrCloud cluster with data replication.

And our team mirrored this journey, starting with a single-core deployment many years ago and eventually progressing to multi-SolrCloud deployments running on Kubernetes.

While Apache Solr provides general scalability, one capability the AlphaSense platform needed was still missing: managing an ever-growing content universe while improving the speed and quality of search for users (hence the need for more Solr collections).

AlphaSense continuously curates data from over 10,000 sources and millions of pieces of content, and both are growing at an accelerating pace. As sources and content grow, so does the pressure on the search experience, particularly on AlphaSense's ability to index and surface content quickly. In other words, there is an ever-growing need to support additional Solr collections (i.e., content sets or sources), along with a constant push to make our clusters more elastic so that search stays fast and reliable.

At the base level, there were three key capabilities needed to meet the challenge of a growing content universe:

- Continuous search index rebalancing

- Dynamic scaling of the SolrCloud cluster

- Management of time series data by specific content sources

Our solution?

Meet AlphaSense SolrCloud Operator, a proprietary service responsible for our SolrCloud management.

Building a dynamic and scalable solution

The team knew that to power search in a scalable way, they also needed to reduce human involvement and automate the manual tasks involved in Solr cluster operations. The goal was to build a dynamic and scalable solution.

To support this, we identified three key objectives for the AlphaSense SolrCloud Operator:

- Automatically create, delete and replicate our SolrCloud collections

- Continuously rebalance our SolrCloud clusters based on defined strategies

- Dynamically scale our SolrCloud clusters based on real-time metrics

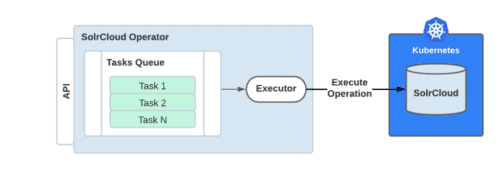

The implementation started with a SolrCloud Operator service base that executes tasks against a target SolrCloud cluster. The Operator base works as a framework that can be extended with different kinds of operations. In addition, the Operator provides an API for posting new tasks and tracking operation status.

In other words, we built the connection between the Solr clusters and any new tasks that needed to run. Initially, tasks were posted manually, but we eventually extended the Operator with a scheduled operation poster for daily maintenance operations.
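To make the idea of an extensible Operator base more concrete, here is a minimal Python sketch. It is not AlphaSense's implementation: the class and function names (`OperatorBase`, `register`, `post_task`, `status`, `run_pending`, `post_daily_maintenance`) and the operation names are hypothetical, and a real service would expose posting and status tracking over an API and run handlers against a live SolrCloud cluster rather than as in-process callbacks.

```python
import itertools
from collections import deque
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Deque, Dict, List


class Status(Enum):
    PENDING = "pending"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


@dataclass
class Task:
    task_id: int
    op_type: str
    params: dict
    status: Status = Status.PENDING
    error: str = ""


class OperatorBase:
    """Hypothetical operator base: a task queue plus pluggable operation handlers."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[dict], None]] = {}
        self._tasks: Dict[int, Task] = {}
        self._queue: Deque[int] = deque()
        self._ids = itertools.count(1)

    def register(self, op_type: str, handler: Callable[[dict], None]) -> None:
        # Extension point: each new kind of operation plugs in as a handler.
        self._handlers[op_type] = handler

    def post_task(self, op_type: str, params: dict) -> int:
        # "Post a new task": validate the type, enqueue it, return an id for tracking.
        if op_type not in self._handlers:
            raise ValueError(f"unknown operation type: {op_type}")
        task = Task(next(self._ids), op_type, params)
        self._tasks[task.task_id] = task
        self._queue.append(task.task_id)
        return task.task_id

    def status(self, task_id: int) -> Status:
        # "Track operation status" for a previously posted task.
        return self._tasks[task_id].status

    def run_pending(self) -> None:
        # Execute queued tasks in FIFO order; a failure is recorded, not raised.
        while self._queue:
            task = self._tasks[self._queue.popleft()]
            task.status = Status.RUNNING
            try:
                self._handlers[task.op_type](task.params)
                task.status = Status.DONE
            except Exception as exc:
                task.status = Status.FAILED
                task.error = str(exc)


def post_daily_maintenance(op: OperatorBase) -> List[int]:
    # Hypothetical scheduled poster: a daily cron-style job would call this.
    return [
        op.post_task("create_collections", {}),
        op.post_task("rebalance", {"strategy": "APS"}),
    ]


if __name__ == "__main__":
    op = OperatorBase()
    log: List[str] = []
    op.register("create_collections", lambda p: log.append("create"))
    op.register("rebalance", lambda p: log.append("rebalance:" + p["strategy"]))
    ids = post_daily_maintenance(op)
    op.run_pending()
    print(log)  # ['create', 'rebalance:APS']
    print([op.status(i).value for i in ids])  # ['done', 'done']
```

Keeping handlers behind a single `register` call is what lets new operation types (collection creation, rebalancing, node scaling) be added without touching the queueing and status-tracking code.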

Building in timely automation

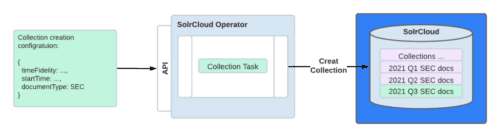

Even after content and sources are added to AlphaSense, they need regular updates to reflect the latest content. So once the Operator base was ready, we got to work on this problem.

The team needed an automated way to create and maintain content sets across different time series (i.e., keep Solr clusters up to date). The solution was a collection creation configuration that expresses which Solr collections should be created from it, their time ranges, and how much history each document type requires. This automated the handling of time series data.
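A collection creation configuration can be sketched as a small data structure plus a planner that compares the collections the configuration calls for with the ones that already exist. This is a hedged illustration only: the field names, the one-collection-per-calendar-year granularity, and the `<doc_type>_<year>` naming scheme are assumptions, not AlphaSense's actual configuration format.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Set, Tuple


@dataclass(frozen=True)
class CollectionConfig:
    # Hypothetical fields: the document type and how many years of history it needs.
    doc_type: str
    history_years: int

    def __post_init__(self) -> None:
        if self.history_years < 1:
            raise ValueError("history_years must be at least 1")


def wanted_collections(cfg: CollectionConfig, today: date) -> List[str]:
    # Assumed granularity: one collection per calendar year, oldest first.
    first = today.year - cfg.history_years + 1
    return [f"{cfg.doc_type}_{year}" for year in range(first, today.year + 1)]


def plan(cfg: CollectionConfig, existing: Set[str], today: date) -> Tuple[List[str], List[str]]:
    """Return (to_create, to_delete) for one document type."""
    wanted = wanted_collections(cfg, today)
    prefix = cfg.doc_type + "_"
    to_create = [name for name in wanted if name not in existing]
    # Only collections belonging to this document type are candidates for deletion.
    to_delete = sorted(n for n in existing if n.startswith(prefix) and n not in wanted)
    return to_create, to_delete


if __name__ == "__main__":
    cfg = CollectionConfig("transcripts", history_years=3)
    existing = {"transcripts_2021", "transcripts_2022", "transcripts_2023", "news_2023"}
    print(plan(cfg, existing, date(2024, 1, 2)))
    # (['transcripts_2024'], ['transcripts_2021'])
```

Run daily, a planner like this rolls the time window forward on its own: a new year's collection appears and collections that fall outside the configured history depth become deletion candidates.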

Always rebalancing

When content is continuously updated and added, each individual Solr cluster changes over time, sometimes significantly.

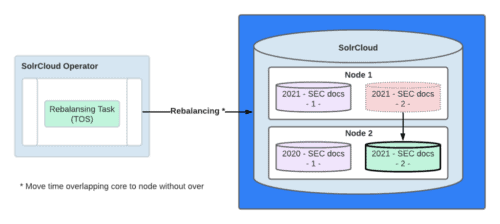

But when a cluster changed, there was no built-in way to easily redistribute Solr cores within it, which brings us to the second objective: rebalancing.

Apache Solr provided a set of APIs to move cores between SolrCloud nodes but did not include a strategy for rebalancing clusters based on given criteria. The team needed one to keep our clusters stable and fast, so we built two strategies for core rebalancing:

- Time overlap strategy (TOS). The basic idea is to minimize how many Solr cores are engaged at any one time. In more technical terms, the operation tries to place Solr cores so that the cores within a node overlap as little as possible in their time ranges. Because user searches are limited to certain time ranges, not every Solr core participates in every search (leaving the idle ones free to be moved).

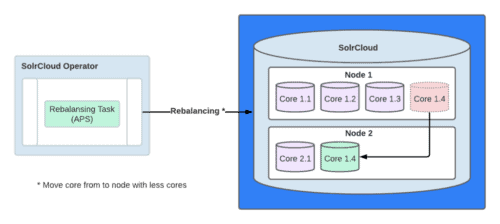

- Autoscaling policy strategy (APS). A set of rebalancing operations dynamically moves Solr cores between nodes based on a defined configuration policy. In other words, several Solr cores can be moved at once, but only while they are not needed to serve a user's search.
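The time overlap strategy can be illustrated as a greedy placement: each core carries a time range, and it goes to the node where that range overlaps least with the cores already placed there, so a time-bounded query tends to touch at most one busy core per node instead of piling onto a single node. The function names, the half-open integer ranges, and the greedy heuristic itself are assumptions for illustration; the exact TOS algorithm is not described in this post.

```python
from typing import Dict, List, Tuple

Range = Tuple[int, int]  # half-open [start, end), e.g. in days


def overlap(a: Range, b: Range) -> int:
    # Length of the intersection of two half-open ranges (0 if disjoint).
    return max(0, min(a[1], b[1]) - max(a[0], b[0]))


def place_by_time_overlap(cores: Dict[str, Range], nodes: List[str]) -> Dict[str, List[str]]:
    """Hypothetical TOS-style placement: each core goes where it overlaps least."""
    placement: Dict[str, List[str]] = {n: [] for n in nodes}

    def cost(node: str, rng: Range) -> Tuple[int, int]:
        # Primary: total time overlap with cores already on the node.
        # Tie-break: prefer the node hosting fewer cores.
        total = sum(overlap(rng, cores[c]) for c in placement[node])
        return total, len(placement[node])

    # Place the longest ranges first; they are the hardest to keep non-overlapping.
    ordered = sorted(cores.items(), key=lambda kv: kv[1][1] - kv[1][0], reverse=True)
    for core, rng in ordered:
        best = min(nodes, key=lambda n: cost(n, rng))
        placement[best].append(core)
    return placement


if __name__ == "__main__":
    cores = {"a": (0, 100), "b": (0, 100), "c": (100, 200), "d": (100, 200)}
    print(place_by_time_overlap(cores, ["node-a", "node-b"]))
    # {'node-a': ['a', 'c'], 'node-b': ['b', 'd']}
```

In the usage example, the two cores covering the same period (`a` and `b`, or `c` and `d`) end up on different nodes, so a query over either period is served by both nodes in parallel.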

Eventually, we settled on running APS rebalancing operations on a defined schedule because of the strategy's versatility and its ability to keep the cluster balanced for performance.
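A policy-driven rebalance can be sketched as a planner that turns a simple balance rule into a list of replica moves, each of which could then be executed through the `MOVEREPLICA` action of Solr's Collections API. The policy shown here (keep per-node replica counts within `max_spread` of each other) and the helper names are hypothetical stand-ins; the actual APS configuration policy is not described in this post, and a scheduler would decide when the plan runs.

```python
from typing import Dict, List, Tuple
from urllib.parse import urlencode

Replica = Tuple[str, str]        # (collection, replica name), e.g. ("news_2024", "core_node3")
Move = Tuple[str, str, str, str]  # (collection, replica, source_node, target_node)


def plan_balance(layout: Dict[str, List[Replica]], max_spread: int = 1) -> List[Move]:
    """Hypothetical policy: per-node replica counts may differ by at most max_spread.

    `layout` maps a Solr node name to the replicas it hosts; it is not modified.
    """
    if max_spread < 1:
        raise ValueError("max_spread must be at least 1")  # 0 could never converge
    if not layout:
        return []
    work = {node: list(reps) for node, reps in layout.items()}
    moves: List[Move] = []
    while True:
        src = max(work, key=lambda n: len(work[n]))
        dst = min(work, key=lambda n: len(work[n]))
        if len(work[src]) - len(work[dst]) <= max_spread:
            return moves
        collection, replica = work[src].pop()
        work[dst].append((collection, replica))
        moves.append((collection, replica, src, dst))


def move_replica_url(solr_base: str, move: Move) -> str:
    # Builds a Collections API MOVEREPLICA request for one planned move.
    collection, replica, _source, target = move
    query = urlencode({"action": "MOVEREPLICA", "collection": collection,
                       "replica": replica, "targetNode": target})
    return f"{solr_base}/admin/collections?{query}"


if __name__ == "__main__":
    layout = {
        "node1:8983_solr": [("news_2024", "core_node1"), ("news_2024", "core_node3"),
                            ("news_2023", "core_node5")],
        "node2:8983_solr": [],
    }
    for move in plan_balance(layout):
        print(move_replica_url("http://localhost:8983/solr", move))
```

Separating planning from execution keeps the policy testable offline and lets the Operator execute the moves one at a time, checking the cluster between steps.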

Scaling in a dynamic way

The last objective of the project, the ability to scale SolrCloud clusters by adding or removing nodes, was still missing.

Kubernetes provides good APIs for manipulating deployments, but to manage adding and removing Solr nodes, the team built a new task type into the Operator for changing a Solr cluster's node count.

The task supports two features: adding or removing one or more nodes. Adding a node is relatively simple: spin up a new Solr node, and SolrCloud registers it with the cluster. However, each new node starts out empty, so we used our existing APS rebalancing operation to populate it, with its initial properties calculated from the existing Solr cluster.
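Populating a newly added node can be sketched as pulling replicas from the most loaded nodes until the new node holds roughly its fair share. This is a simplified stand-in for the APS rebalance, not the Operator's actual logic: the fair-share rule (`total // node_count`) and the function name are assumptions, and scaling the Kubernetes workload itself is left out.

```python
from typing import Dict, List, Tuple

Move = Tuple[str, str, str]  # (replica, source_node, target_node)


def populate_new_node(layout: Dict[str, List[str]], new_node: str) -> List[Move]:
    """Hypothetical sketch: plan moves that give a freshly registered, empty node
    its fair share of replicas, taken from the most loaded existing nodes."""
    if new_node in layout:
        raise ValueError(f"{new_node} is already part of the cluster")
    work = {node: list(reps) for node, reps in layout.items()}
    work[new_node] = []
    total = sum(len(reps) for reps in work.values())
    target = total // len(work)  # fair share, rounded down
    moves: List[Move] = []
    while len(work[new_node]) < target:
        # Take from whichever existing node currently hosts the most replicas.
        src = max((n for n in work if n != new_node), key=lambda n: len(work[n]))
        replica = work[src].pop()
        work[new_node].append(replica)
        moves.append((replica, src, new_node))
    return moves


if __name__ == "__main__":
    layout = {"n1": ["r1", "r2", "r3"], "n2": ["r4", "r5", "r6"]}
    print(populate_new_node(layout, "n3"))
    # [('r3', 'n1', 'n3'), ('r6', 'n2', 'n3')]
```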

Node removal was more complicated, because the Solr cores located on a to-be-terminated node must be moved to other nodes before termination. Again we used our APS strategy, extending it to exclude to-be-terminated nodes as move targets, and then executed a rebalance policy before terminating the instances.
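Node removal can be sketched the same way: leaving nodes are excluded as move targets, and every replica they host is planned onto the least-loaded remaining node. The least-loaded rule stands in for the full APS policy, and the function name is hypothetical; under this sketch, the Operator would execute the returned moves, wait for them to complete, and only then terminate the instances.

```python
from typing import Dict, List, Set, Tuple

Move = Tuple[str, str, str]  # (replica, source_node, target_node)


def drain_nodes(layout: Dict[str, List[str]], leaving: Set[str]) -> List[Move]:
    """Hypothetical sketch: plan moves that empty every node in `leaving`.

    Leaving nodes are never chosen as targets; each replica goes to the
    remaining node with the fewest replicas at that point in the plan.
    """
    targets = [n for n in layout if n not in leaving]
    if not targets:
        raise ValueError("cannot remove every node in the cluster")
    load = {n: len(layout[n]) for n in targets}
    moves: List[Move] = []
    for node in sorted(leaving):
        for replica in layout.get(node, []):
            dst = min(targets, key=lambda n: load[n])
            load[dst] += 1  # account for the move before planning the next one
            moves.append((replica, node, dst))
    return moves


if __name__ == "__main__":
    layout = {"n1": ["r1"], "n2": ["r2", "r3"], "n3": ["r4", "r5"]}
    print(drain_nodes(layout, {"n3"}))
    # [('r4', 'n3', 'n1'), ('r5', 'n3', 'n1')]
```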

With all three of our initial objectives met, we can confidently classify this project as a mission accomplished.

As a next step, we are working on automatically scaling SolrCloud clusters based on performance metrics. This project aims to speed up the Solr replication currently used in core-moving and rebalancing operations.

If you are obsessed with search scalability optimization like we are, join our fantastic team of talented engineers to help us build a new era of search technology.

Note: Some time after we created the first version of the Operator, Apache Solr Operator emerged as an open-source alternative for managing SolrCloud on Kubernetes, covering part of the functionality of the AlphaSense SolrCloud Operator. As AlphaSense's proprietary solution expands beyond the open-source Operator, we are considering a contribution to the community, which we may cover in an upcoming blog post. Stay tuned!
